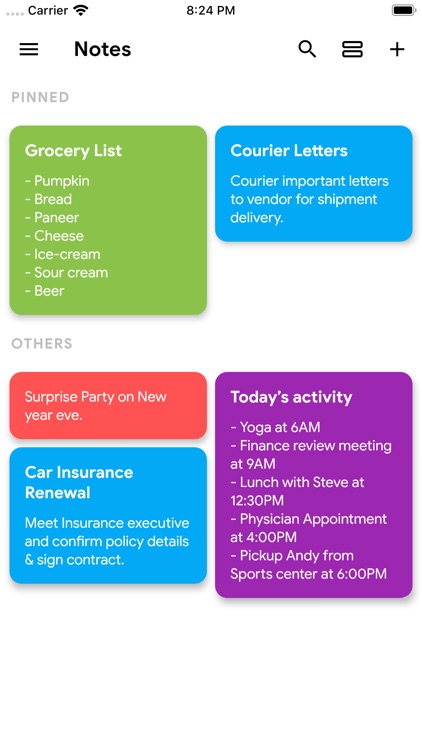

Quick Notes is a simple and awesome notepad app. It gives you a quick and simple notepad editing experience when you write notes, memos, e-mails, messages, shopping lists and to-do lists. Taking notes with Quick Notes is easier than any other notepad or memo pad app. Product Description. Jun 08, 2020 EX6100v2 / EX6150v2 Firmware Version 1.0.1.90. Quick and easy solutions are available for you in the NETGEAR community. Ask the Community. Need to Contact Support?

Interactive Analysis with the Spark Shell

This tutorial provides a quick introduction to using Spark. We will first introduce the API through Spark’s interactive shell (in Python or Scala), then show how to write standalone applications in Java, Scala, and Python. See the programming guide for a more complete reference.

To follow along with this guide, first download a packaged release of Spark from the Spark website. Since we won’t be using HDFS, you can download a package for any version of Hadoop.

Basics

Spark’s shell provides a simple way to learn the API, as well as a powerful tool to analyze data interactively. It is available in either Scala (which runs on the Java VM and is thus a good way to use existing Java libraries) or Python. Start it by running ./bin/spark-shell (Scala) or ./bin/pyspark (Python) in the Spark directory.

Spark’s primary abstraction is a distributed collection of items called a Resilient Distributed Dataset (RDD). RDDs can be created from Hadoop InputFormats (such as HDFS files) or by transforming other RDDs. Let’s make a new RDD from the text of the README file in the Spark source directory:

RDDs have actions, which return values, and transformations, which return pointers to new RDDs. Let’s start with a few actions:

Now let’s use a transformation. We will use the filter transformation to return a new RDD with a subset of the items in the file.

We can chain together transformations and actions:
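The shell session for these steps is not reproduced here, so the following is a minimal sketch in plain Python of what the calls compute, assuming a small in-memory list stands in for the README. The matching PySpark calls are noted in comments; sample_lines and the helper names are illustrative and not part of Spark.

```python
# Stand-in for the lines of README.md. In the PySpark shell this would be:
#   textFile = sc.textFile("README.md")
sample_lines = [
    "# Apache Spark",
    "",
    "Spark is a fast and general cluster computing system.",
    "It provides high-level APIs in Scala, Java, and Python.",
    "You can find the latest Spark documentation on the project web page.",
]


def count(lines):
    """Action: number of items (PySpark: textFile.count())."""
    return len(lines)


def first(lines):
    """Action: first item (PySpark: textFile.first())."""
    return lines[0]


def lines_with(lines, word):
    """Transformation: subset of lines containing `word`
    (PySpark: textFile.filter(lambda line: word in line))."""
    return [line for line in lines if word in line]


# Chaining a transformation and an action
# (PySpark: textFile.filter(lambda line: "Spark" in line).count()).
lines_with_spark = lines_with(sample_lines, "Spark")
num_spark_lines = count(lines_with_spark)
```

One difference worth keeping in mind: the list comprehension here runs immediately, whereas an RDD transformation only describes a new RDD and the work happens when an action is called.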

More on RDD Operations

RDD actions and transformations can be used for more complex computations. Let’s say we want to find the line with the most words:

The computation first maps each line to an integer value (its word count), creating a new RDD. reduce is then called on that RDD to find the largest word count. In Scala, the arguments to map and reduce are function literals (closures), and can use any language feature or Scala/Java library. For example, we can easily call functions declared elsewhere, such as the Math.max() function, to make this code easier to understand.

One common data flow pattern is MapReduce, as popularized by Hadoop. Spark can implement MapReduce flows easily:

Here, we combined the flatMap, map and reduceByKey transformations to compute the per-word counts in the file as an RDD of (String, Int) pairs. To collect the word counts in our shell, we can use the collect action:

In Python, the arguments to map and reduce are anonymous functions (lambdas), but we can also pass any top-level Python function we want. For example, we can define a max function to make this code easier to understand.
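As a rough illustration of the map-then-reduce step with a named top-level function, here is a sketch in plain Python using functools.reduce in place of an RDD. The helper is called larger rather than max to avoid shadowing the built-in; all names are illustrative, and the PySpark form is shown in a comment.

```python
from functools import reduce


def word_count(line):
    """Map step: turn a line into its number of words."""
    return len(line.split())


def larger(a, b):
    """Top-level function handed to reduce instead of a lambda."""
    return a if a > b else b


def most_words(lines):
    """Largest per-line word count.

    PySpark equivalent, assuming textFile is an RDD of lines:
      textFile.map(lambda line: len(line.split())).reduce(larger)
    """
    return reduce(larger, map(word_count, lines))
```

Calling most_words(["a b c", "d", "e f g h"]) walks the word counts 3, 1 and 4 and keeps the largest.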

The MapReduce flow works the same way in Python: combining the flatMap, map and reduceByKey transformations computes the per-word counts in the file as an RDD of (string, int) pairs. To collect the word counts in our shell, we can use the collect action.
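The word-count flow described above can be sketched in plain Python as follows. flat_map and reduce_by_key are hypothetical stand-ins written for this example that mimic the behaviour of the RDD methods on ordinary lists; the PySpark chain is shown in a comment.

```python
def flat_map(func, items):
    """Apply func to each item and flatten the resulting lists."""
    return [out for item in items for out in func(item)]


def reduce_by_key(func, pairs):
    """Merge the values of pairs that share a key using func."""
    merged = {}
    for key, value in pairs:
        merged[key] = func(merged[key], value) if key in merged else value
    return merged


def word_counts(lines):
    """Per-word counts for a list of lines.

    PySpark equivalent, assuming textFile is an RDD of lines:
      wordCounts = (textFile.flatMap(lambda line: line.split())
                            .map(lambda word: (word, 1))
                            .reduceByKey(lambda a, b: a + b))
      wordCounts.collect()
    """
    words = flat_map(lambda line: line.split(), lines)
    pairs = [(word, 1) for word in words]
    return reduce_by_key(lambda a, b: a + b, pairs)
```

The dictionary returned here plays the role of the collected (word, count) pairs; in Spark the pairs stay distributed until collect is called.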

Caching

Spark also supports pulling data sets into a cluster-wide in-memory cache. This is very useful when data is accessed repeatedly, such as when querying a small “hot” dataset or when running an iterative algorithm like PageRank. As a simple example, let’s mark our linesWithSpark dataset to be cached:
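To make the effect of caching concrete, here is a toy sketch in plain Python. LazyDataset is a hypothetical class written for this example and is not how Spark implements caching; it only shows that an uncached dataset is recomputed by every action, while a cached one is computed once. In the PySpark shell the corresponding calls would be linesWithSpark.cache() followed by repeated linesWithSpark.count().

```python
class LazyDataset:
    """Toy stand-in for an RDD: recomputes on every action unless cached."""

    def __init__(self, compute):
        self._compute = compute   # zero-argument function producing a list
        self._use_cache = False
        self._cached = None
        self.computations = 0     # how many times compute() actually ran

    def cache(self):
        """Mark the dataset for caching; nothing is computed yet."""
        self._use_cache = True
        return self

    def _materialize(self):
        if self._use_cache and self._cached is not None:
            return self._cached
        self.computations += 1
        data = self._compute()
        if self._use_cache:
            self._cached = data
        return data

    def count(self):
        """Action: triggers computation (or reuses the cached result)."""
        return len(self._materialize())


lines = ["Spark is fast", "hello", "Apache Spark"]
uncached = LazyDataset(lambda: [line for line in lines if "Spark" in line])
cached = LazyDataset(lambda: [line for line in lines if "Spark" in line]).cache()

# Run the same action twice on each dataset.
uncached.count()
uncached.count()
cached.count()
cached.count()
```

After these calls, uncached.computations is 2 and cached.computations is 1, which is the saving that matters when the underlying computation is expensive or repeated many times.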

It may seem silly to use Spark to explore and cache a 100-line text file. The interesting part is that these same functions can be used on very large data sets, even when they are striped across tens or hundreds of nodes. You can also do this interactively by connecting bin/spark-shell (Scala) or bin/pyspark (Python) to a cluster, as described in the programming guide.

Now say we wanted to write a standalone application using the Spark API. We will walk through a simple application in Scala (with sbt), Java (with Maven), and Python.

We’ll create a very simple Spark application in Scala. So simple, in fact, that it’s named SimpleApp.scala.

This program just counts the number of lines containing ‘a’ and the number containing ‘b’ in the Spark README. Note that you’ll need to replace YOUR_SPARK_HOME with the location where Spark is installed. Unlike the earlier examples with the Spark shell, which initializes its own SparkContext, we initialize a SparkContext as part of the program.

We pass the SparkContext constructor a SparkConf object, which contains information about our application.

Our application depends on the Spark API, so we’ll also include an sbt configuration file, simple.sbt, which explains that Spark is a dependency. This file also adds a repository that Spark depends on.

For sbt to work correctly, we’ll need to lay out SimpleApp.scala and simple.sbt according to the typical directory structure. Once that is in place, we can create a JAR package containing the application’s code, then use the spark-submit script to run our program.

This example will use Maven to compile an application jar, but any similar build system will work.

We’ll create a very simple Spark application, SimpleApp.java:

This program just counts the number of lines containing ‘a’ and the number containing ‘b’ in a text file. Note that you’ll need to replace YOUR_SPARK_HOME with the location where Spark is installed. As with the Scala example, we initialize a SparkContext, though we use the special JavaSparkContext class to get a Java-friendly one. We also create RDDs (represented by JavaRDD) and run transformations on them. Finally, we pass functions to Spark by creating classes that extend spark.api.java.function.Function. The Spark programming guide describes these differences in more detail.

To build the program, we also write a Maven pom.xml file that lists Spark as a dependency. Note that Spark artifacts are tagged with a Scala version.

We lay out these files according to the canonical Maven directory structure:

Now, we can package the application using Maven and execute it with ./bin/spark-submit.

Now we will show how to write a standalone application using the Python API (PySpark).

As an example, we’ll create a simple Spark application, SimpleApp.py:

This program just counts the number of lines containing ‘a’ and the number containing ‘b’ in a text file. Note that you’ll need to replace YOUR_SPARK_HOME with the location where Spark is installed. As with the Scala and Java examples, we use a SparkContext to create RDDs. We can pass Python functions to Spark, which are automatically serialized along with any variables that they reference. For applications that use custom classes or third-party libraries, we can also add code dependencies to spark-submit through its --py-files argument by packaging them into a .zip file (see spark-submit --help for details). SimpleApp is simple enough that we do not need to specify any code dependencies.
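The listing for SimpleApp.py is not reproduced here, so the following is a hedged sketch of what such an application could look like, not the original file. The run function uses the PySpark SparkConf and SparkContext classes and assumes PySpark is installed; count_lines_containing is a hypothetical helper added only so the counting logic can be checked without Spark.

```python
"""SimpleApp.py: a sketch of the application described above."""


def count_lines_containing(lines, text):
    """Plain-Python version of the counting logic, usable without Spark."""
    return sum(1 for line in lines if text in line)


def run(log_file):
    """Count lines containing 'a' and 'b' in log_file using PySpark."""
    # Imported here so the helper above works without PySpark installed.
    from pyspark import SparkConf, SparkContext

    conf = SparkConf().setAppName("Simple App")
    sc = SparkContext(conf=conf)
    try:
        # Cache the file because it is scanned twice below.
        log_data = sc.textFile(log_file).cache()
        num_as = log_data.filter(lambda s: "a" in s).count()
        num_bs = log_data.filter(lambda s: "b" in s).count()
    finally:
        sc.stop()
    return num_as, num_bs


# To use this under spark-submit, call for example:
#   print(run("YOUR_SPARK_HOME/README.md"))
```

The Spark import is kept inside run so that the module can be loaded and the helper exercised on a machine without Spark; the call at the bottom is left as a comment so that loading the file never starts a SparkContext by accident.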

We can run this application using the bin/spark-submit script:

Congratulations on running your first Spark application!

- For an in-depth overview of the API, start with the Spark programming guide, or see the “Programming Guides” menu for other components.

- For running applications on a cluster, head to the deployment overview.

- Finally, Spark includes several samples in the examples directory (Scala, Java, Python) that you can run yourself.

Please download file HERE!

NOTE: ProPlex IQ Tester LV units running firmware versions prior to v0.9.8 require a Bootloader Update to prepare for newer firmware versions. You can find update instructions HERE

What's New in Version 1.0.1:

NETWORKING

- Packet Lister features freezing and touch-sensitive scrolling

- New ping sender and responder mode

- New Ethernet Link Layer Discovery Protocol (LLDP) detection

- Expanded protocol list to discover and support more industry protocols

- Expanded PoE test results - now detects Passive PoE, PoE (802.3af) and PoE+ (802.3at) as well as A and B wiring mode

- Timecode can be sent or received on both XLR ports

- LTC signal scope now viewable in timecode receiver window

- Added RDM supported PIDs covering most of E1.20 and E1.37-1

- New RDM “Raw PID” mode allows custom GET and SET messages

- New DMX transmitter interface with multiple sources available for sending and editing DMX values

- Virtual touch responsive faders arranged for RGB, RGBW, 4 channel or 6 channel

- Added description for DMX Receiver Configuration universe range - it is used to set ArtPollReply and sACN multicast join range

- DMX Output Port now defaults to Disabled on powerup

- Added DMX Receiver sACN multicast join enable/disable toggle

- Optional numeric CMD keypad for direct DMX value entry

- DMX Effect Engine with editable waveform, BPM, channel selection and more

- Full Universe Scene Editor to capture external DMX source and playback of saved scenes

- Art-Net and sACN output now able to output universe ranges

- New DMX receiver interface with all universes viewable in the same window

- Additional display modes for viewing RGB and RGBW data

- Restructured user interface with improved functionality, unified style for all windows and more intuitive navigation

- Editable fields have popup windows for data entry

- Listed items can now be scrolled with a swipe touch gesture

- Touchscreen is more responsive

- New bootloader for IQ Tester – future firmware updates for units now require only USB cable connection and firmware file

- Added Firmware utility - can now update firmware of Solaris fixtures

- Firmware Files for Solaris fixtures are copied into IQ Tester internal memory via USB cable transfer

- New charging and power on/off behavior - unit will stay off when charger is connected and can be turned on/off at any time by holding central keypad button

- New automatic shutdown with configurable timeout